I just can’t resist this one. Vista Equity Partners is paying around $100 million less than expected for Tibco Software Inc because Goldman Sachs got the number of shares wrong in the spreadsheet that did all the calculations. OK, $100 million isn’t much in the context of a $4 billion deal, but it’s an awful lot of money in any other context, even if it is only just over twice Goldman’s fees to Tibco for the transaction. It’s not clear how the mistake arose.


… but some are more wrong than others. It’s emerged that a calculator for cholesterol-related heart disease risk is giving some highly dubious results, so completely healthy people could start taking unnecessary drugs. It’s not clear whether the problem is in the specification or the implementation, but either way the output looks unreliable.

The answer was that the calculator overpredicted risk by 75 to 150 percent, depending on the population. A man whose risk was 4 percent, for example, might show up as having an 8 percent risk. With a 4 percent risk, he would not warrant treatment — the guidelines that say treatment is advised for those with at least a 7.5 percent risk and that treatment can be considered for those whose risk is 5 percent.
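To see how much difference that makes, here is a rough sketch of the arithmetic in Python. The thresholds are the ones quoted above; the function names are my own invention and have nothing to do with the real calculator.

```python
# Thresholds quoted above: treatment advised at 7.5% risk, considered at 5%.
ADVISED = 7.5
CONSIDERED = 5.0


def recommendation(risk_percent):
    """Map a risk (in percent) to the guideline category."""
    if risk_percent >= ADVISED:
        return "advised"
    if risk_percent >= CONSIDERED:
        return "considered"
    return "not warranted"


def reported_risk(true_risk_percent, overprediction_percent):
    """Risk a calculator would show if it overpredicts by the given percentage."""
    return true_risk_percent * (1 + overprediction_percent / 100)


# A man whose true risk is 4 percent, at various levels of overprediction.
for over in (0, 75, 100, 150):
    shown = reported_risk(4.0, over)
    print(f"overprediction {over:>3}%: shown {shown:.1f}% -> {recommendation(shown)}")
```

Anywhere in the 75 to 150 percent range, the 4 percent man is pushed over at least the lower threshold, and at 100 percent (the 8 percent in the quote) he is already past the upper one.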

According to the New York Times (may be gated), questions were raised a year ago, before the calculator was released, but somehow the concerns weren’t passed on to the right people. It’s difficult to tell from a press article, but it appears as if those responsible for the calculator are reacting extremely defensively, and not really admitting that there’s anything wrong with the model.

while the calculator was not perfect, it was a major step forward, and that the guidelines already say patients and doctors should discuss treatment options rather than blindly follow a calculator

Of course they’re right, in that you should never believe a model to the exclusion of all other evidence, but it’s very difficult for non-experts not to. Somehow, something coming out of a computer always seems more reliable than it actually is.

I’ve recently been working with the Centre for Risk Studies in Cambridge on some extreme scenarios: one-in-200, or even less likely, events. It’s been an interesting challenge, not least because it’s very difficult to make things extreme enough. We find ourselves saying that the step in the scenario that we’re working on would never actually happen, because not everything would go wrong in the right way. But of course that’s just the point: we’re looking at Swiss cheese situations.

A couple of times we’ve dreamt up something that we thought was really unlikely, only for something remarkably similar to turn up in the news. We came up with the idea that data could be irretrievably corrupted, and a few days later found ourselves reading about a Xerox copier that irretrievably corrupted the images.

So I was really interested to read a story about a security researcher who’s apparently found a really nasty piece of malware — except it’s not clear if he’s making the whole thing up.

People following this story fall into a few different camps. Many believe everything he says — or at least most of it — is true. Others think he’s perpetrating a huge social engineering experiment, to see what he can get the world and the media to swallow. A third camp believes he’s well-intentioned, but misguided due to security paranoia nurtured through the years.

The thing is, the individual pieces of the scenario are all just about possible. But is it possible for them all to happen in a connected way? For the holes in the Swiss cheese to line up?

The absolutely amazing thing about this story is that nearly everything Ruiu reveals is possible, even the more unbelievable details. Ruiu has also been willing to share what forensic evidence he has with the public (you can download some of the data yourself) and specialized computer security experts.

Where developments start getting preposterous, no matter how much leeway you give him, is how many of the claims are unbelievable (not one, not two, but all of them) and why much of the purported evidence is supposedly modified by the bad guys after he releases it, thus eliminating the evidence. The bad guys (whoever they are) are not only master malware creators, but they can reach into Ruiu’s public websites and remove evidence within images after he has posted it. Or the evidence erases itself as he’s copying it for further distribution.

Again, this would normally be the final straw of disbelief, but if the malware is as devious as described and does exist, who’s to say the bad guys don’t have complete control of everything he’s posting? If you accept all that Ruiu is saying, there’s nothing to prove it hasn’t happened.

I don’t know. I haven’t looked into the details at all, and probably wouldn’t understand them even if I did. But there’s certainly a lesson here for those of us developing unlikely scenarios: it’s difficult to make things up that are more unlikely than things that actually happen.

Swiss cheese

Why do things go wrong? Sometimes, it’s a whole combination of factors. Felix Salmon has some good examples, and reminded me of one of my favourite metaphors: the Swiss cheese model of accident causation.

In the Swiss Cheese model, an organization’s defenses against failure are modeled as a series of barriers, represented as slices of cheese. The holes in the slices represent weaknesses in individual parts of the system and are continually varying in size and position across the slices. The system produces failures when a hole in each slice momentarily aligns, permitting (in Reason’s words) “a trajectory of accident opportunity”, so that a hazard passes through holes in all of the slices, leading to a failure.
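A toy calculation shows why extra slices help. This is only a sketch under a big assumption that the model itself doesn’t make: that the holes in each slice open and close independently of the others. The probabilities are made up for illustration.

```python
from math import prod


def failure_probability(hole_probs):
    """Chance that a hazard passes every slice, assuming the slices are independent."""
    return prod(hole_probs)


# Hypothetical chance that each slice has a hole in the hazard's path.
three_slices = [0.1, 0.1, 0.1]
four_slices = three_slices + [0.1]

print(failure_probability(three_slices))
print(failure_probability(four_slices))
```

Under that assumption each extra slice multiplies the chance of failure by its own hole probability. Real defences are rarely independent, which is exactly when the holes line up more often than the arithmetic suggests.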

It’s a lovely vision, those little accidents waiting to happen, wriggling through the slices of cheese. But as Salmon points out

… it’s important to try to prevent failures by adding extra layers of Swiss cheese, and by assiduously trying to minimize the size of the holes in any given layer. But as IT systems grow in size and complexity, they will fail in increasingly unpredictable and catastrophic ways. No amount of post-mortem analysis, from Congress or the SEC or anybody else, will have any real ability to stop those catastrophic failures from happening. What’s more, it’s futile to expect that we can somehow design these systems to “fail well” and thereby lessen the chances of even worse failures in the future.

Which reminds me of Tony Hoare’s comment on complexity and reliability:

There are two ways of constructing a software design: One way is to make it so simple that there are obviously no deficiencies, and the other way is to make it so complicated that there are no obvious deficiencies. The first method is far more difficult.

The right tool?

The next time you notice something being done in Excel where you work, take a moment to question whether it’s the right tool for the job, or whether you or someone in your organisation is a tool for allowing its use.

No, not my words, but from the FT’s consistently excellent Alphaville blog. The point is, it’s easy to use Excel. But it’s very hard to use Excel well.

There are many people out there who can use Excel to solve a problem. They knock up a spreadsheet with a few clicks of the mouse, some dragging and dropping, a few whizzo functions, some spiffy charts, and it all looks really slick. But what if anything needs to be changed? Sensitivity testing? And how do you know you got it right in the first place? Building spreadsheets is an area in which people are overconfident of their abilities, and tend to think that nothing can go wrong.

Instead of automatically reaching for the mouse, why not Stop Clicking, Start Typing?

But we won’t. There’s a huge tendency to avoid learning new things, and everyone thinks they know how to use Excel. The trouble is, they know how to use it for displaying data (mostly), and don’t realise that what they are really doing is computer programming. A friend of mine works with a bunch of biologists, and says

I spend most of my time doing statistical analyses and developing new statistical methods in R. Then the biologists stomp all over it with Excel, trash their own data, get horribly confused, and complain that “they’re not programmers” so they won’t use R.

But that’s the problem. They are programmers, whether they like it or not.

Personally, I don’t think that things will change. We’ll keep on using Excel, there will keep on being major errors in what we do, and we’ll continue to throw up our hands in horror. But it’s rather depressing — it’s so damned inefficient, if nothing else.

There’s a bit of a furore going on at the moment: it turns out that a controversial paper in the debate about the after-effects of the financial crisis had some peculiarities in its data analysis.

Rortybomb has a great description, and the FT’s Alphaville and Tyler Cowen have interesting comments.

In summary, back in 2010 Carmen Reinhart and Kenneth Rogoff published a paper, Growth in a Time of Debt, in which they claim that “median growth rates for countries with public debt over 90 percent of GDP are roughly one percent lower than otherwise; average (mean) growth rates are several percent lower.” Reinhart and Rogoff didn’t release the data they used for their analysis. Since then, apparently, people have tried and failed to reproduce the analysis that gave this result.

Now, a paper has been released that does reproduce the result: Herndon, Ash and Pollin’s Does High Public Debt Consistently Stifle Economic Growth? A Critique of Reinhart and Rogoff.

Except that it doesn’t, really. Herndon, Ash and Pollin identify three issues with Reinhart and Rogoff’s analysis, which mean that the result is not quite what it seems at first glance. It’s all to do with the weighted average that R&R use for the growth rates.

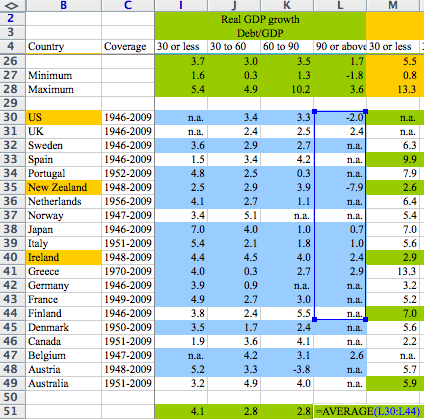

First, there are data sets for 20 countries covering the period 1946-2009. R&R exclude data for three countries for the first few years. It turns out that those three countries had high debt levels and solid growth in the omitted periods. R&R didn’t explain these exclusions.

Second, the weights for the averaging aren’t straightforward (or, possibly, they are too straightforward). Rortybomb has a good explanation:

Reinhart-Rogoff divides country years into debt-to-GDP buckets. They then take the average real growth for each country within the buckets. So the growth rate of the 19 years that the U.K. is above 90 percent debt-to-GDP are averaged into one number. These country numbers are then averaged, equally by country, to calculate the average real GDP growth weight.

In case that didn’t make sense, let’s look at an example. The U.K. has 19 years (1946-1964) above 90 percent debt-to-GDP with an average 2.4 percent growth rate. New Zealand has one year in their sample above 90 percent debt-to-GDP with a growth rate of -7.6. These two numbers, 2.4 and -7.6 percent, are given equal weight in the final calculation, as they average the countries equally. Even though there are 19 times as many data points for the U.K.
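The difference between the two weightings is easy to check for yourself. This little Python sketch uses only the two countries and the figures from the example above, so it isolates the effect rather than reproducing the full calculation.

```python
# Figures from the example: (country, years above 90% debt-to-GDP, average growth %).
countries = [("UK", 19, 2.4), ("New Zealand", 1, -7.6)]

# Each country counts once, however many years it contributes.
equal_by_country = sum(growth for _, _, growth in countries) / len(countries)

# Each country-year counts once, so the UK's 19 years outweigh New Zealand's one.
total_years = sum(years for _, years, _ in countries)
weighted_by_years = sum(years * growth for _, years, growth in countries) / total_years

print(round(equal_by_country, 2))   # -2.6
print(round(weighted_by_years, 2))  # 1.9
```

So for these two countries, the choice of weights alone moves the answer from a contraction of 2.6 percent to growth of 1.9 percent.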

Third, there was an Excel error in the averaging. A formula omits five rows. Again, Rortybomb has a good picture:

Oops!
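A formula whose range stops short of the data is very easy to write and very hard to spot. Here is a hedged sketch of the slip in Python, together with the sort of check that would catch it. The growth figures are invented for illustration and are not the R&R data; the function names are mine.

```python
from statistics import mean

# Hypothetical growth figures, one per country row. Not the R&R data.
growth = [3.1, 2.2, 2.6, 2.9, 3.5, 0.7, 1.0, 1.9, 2.9, 0.3,
          1.0, 0.7, 1.1, 2.4, -2.0, 1.6, 2.0, 1.3, 2.4, -1.8]


def range_mean(values, first, last):
    """Mean of values[first..last] inclusive, like AVERAGE over a fixed cell range."""
    return mean(values[first:last + 1])


def checked_mean(values, first, last):
    """Same as range_mean, but refuses a range that does not cover every row."""
    covered = last - first + 1
    if first != 0 or covered != len(values):
        raise ValueError(f"range covers {covered} of {len(values)} rows")
    return range_mean(values, first, last)


print(range_mean(growth, 0, 14))  # silently ignores the last five rows
print(range_mean(growth, 0, 19))  # all twenty rows
```

The first call returns a perfectly plausible number, which is the whole problem: nothing tells you that five rows are missing unless you go looking.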

So, in summary, the weighted average omits some years, some countries, and isn’t weighted in the expected way. It doesn’t seem to me that any one of these is the odd man out, and I don’t think it really matters why either of the omissions occurred: in other words, I don’t think this is a major story about an Excel error.

I do think, though, that it’s an excellent example of something I’ve been worried about for some time: should you believe claims in published papers, when the claims are based on data analysis or modelling?

Let’s consider another, hypothetical, example. Someone’s modelled, say, the effects of differing capital levels on bank solvency in a financial crisis. There’s a beautifully argued paper, full of elaborate equations specifying interactions between this, that and the other. Everyone agrees that the equations are the bee’s knees, and appear to make sense. The paper presents results from running a model based on the equations. How do you know whether the model does actually implement all the spiffy equations correctly? By the way, I don’t think it makes any difference whether or not the papers are peer reviewed. It’s not my experience that peer reviewers check the code.

In most cases, you just can’t tell, and have to take the results on trust. This worries me. Excel errors are notorious. And there’s no reason to think that other models are error-free, either. I’m always finding bugs in people’s programs.

Transparency is really the only solution. Data should be made available, as should the source code of any models used. It’s not the full answer, of course, as there’s then the question of whether anyone has bothered to check the transparently provided information. And, if they have, what they can do to disseminate the results. Obviously for an influential paper like the R&R paper, any confirmation that the results are reproducible or otherwise is likely to be published itself, and enough people will be interested that the outcome will become widely known. But there’s no generally applicable way of doing it.

It’s good to test your software. That’s pretty much a given, as far as I’m concerned. If you don’t test it, you can’t tell whether it will work. It seems pretty obvious.

It also seems pretty obvious that a) you shouldn’t use test data in a live system, b) in order to test whether it’s doing the right thing, you have to know what the right thing is and c) your system should cope in reasonable ways with reasonably common situations.

If you use test data in a live system there’s a big risk that the test data will be mistaken for real data and give the wrong results to users. If you label all the test data as being different, or if it’s unlike real data in some other way, so that it can’t be confused with the real stuff, there’s a risk that the labelling will change the behaviour of the system, so the test becomes invalid. Because of this, most testing takes place before a system actually goes live. That’s all very well, unless a system’s outputs depend on the data that it’s used in the past. In that case you need to make sure that the actual system that goes live isn’t contaminated in any way by test data, otherwise you could, to take an example at random, accidentally downgrade France’s credit rating.

There’s a possibility that if you don’t have a full specification of a system, your testing will be incomplete. Well, it’s more of a certainty, really. This becomes an especial problem if you are buying a system (or component) in. If you don’t know exactly how it’s meant to behave in all circumstances, you can’t tell whether it’s working or not. It’s not really an answer just to try it out in a wide variety of situations, and assume it will behave the same way in similar situations in the future, because you don’t know precisely what differences might be significant and result in unexpected behaviours. The trouble is, the supplier may be concerned that a fully detailed specification might enable you to reverse engineer the bought-in system, and would thus endanger their intellectual property rights. There’s a theory that this might actually have happened with the Chinese high speed rail network, which has had some serious accidents in the last year or so.

It can’t be that uncommon that when people go online to enter an actual meter reading, because the estimated reading is wrong, the actual reading is less than the estimated one. In fact, that’s probably why most people bother to enter their readings. So assuming that the meter has gone round the clock, through 9999 units, to get from the old reading to the new one, doesn’t seem like a good idea. The article explains the full story: you can only enter a reduced reading on the Southern Electric site within 2 weeks of the date of the original one. But the time limit isn’t made clear to users, and not getting round to something within 2 weeks is, in my experience, far from unusual. Some testing from the user point of view would surely have been useful.
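To make the round-the-clock assumption concrete, here is a small Python sketch. It is my guess at the shape of the logic, not Southern Electric’s actual code: the four-digit meter, the function names and the readings are all assumptions for illustration.

```python
DIALS = 4                 # assumed four-digit meter, so it rolls over after 9999
ROLLOVER = 10 ** DIALS


def naive_usage(previous, current):
    """Treat any lower reading as the meter having gone round the clock."""
    return (current - previous) % ROLLOVER


def checked_usage(previous, current, previous_was_estimate):
    """Treat a lower reading as a correction when the previous one was only an estimate."""
    if current < previous and previous_was_estimate:
        return None       # nothing to bill: the estimate needs correcting instead
    return (current - previous) % ROLLOVER


# Estimated reading 5200, actual reading 5100 entered by the customer.
print(naive_usage(5200, 5100))          # 9900
print(checked_usage(5200, 5100, True))  # None
```

The naive version bills 9900 units for a reading that was really a correction of 100 units in the customer’s favour. A test written from the user’s point of view, with an actual reading below an estimate, would show that up straight away.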